Deploying a Nested Lab Environment with vRA - Part 1

Over the last few weeks I've been building a vRA blueprint, backed by a number of vRO workflows, that deploys a nested vSphere lab environment.

I'll go through the project in this blog series. This is the first part and will cover the underlying environment and the vRA configuration. Part 2 will cover the vRA blueprint and part 3 the vRO workflows that run the extensibility. Part 4 will summarize the status of the project, discuss a few bugs/quirks and describe some of the future plans.

The project

One of the use cases for this project is to provide my colleagues a way to spin up a vSphere lab, test out or demo something, and then destroy it with no trace of what happened inside. Kind of like the VMware Hands-On-Labs (HOL).

We'll have the nested environments running as separate "islands" in our existing lab environment, so that multiple environments can run independently of each other and of the underlying resources. To be able to do this we need to separate and automate the networking, and what better way to do this than with NSX-T?

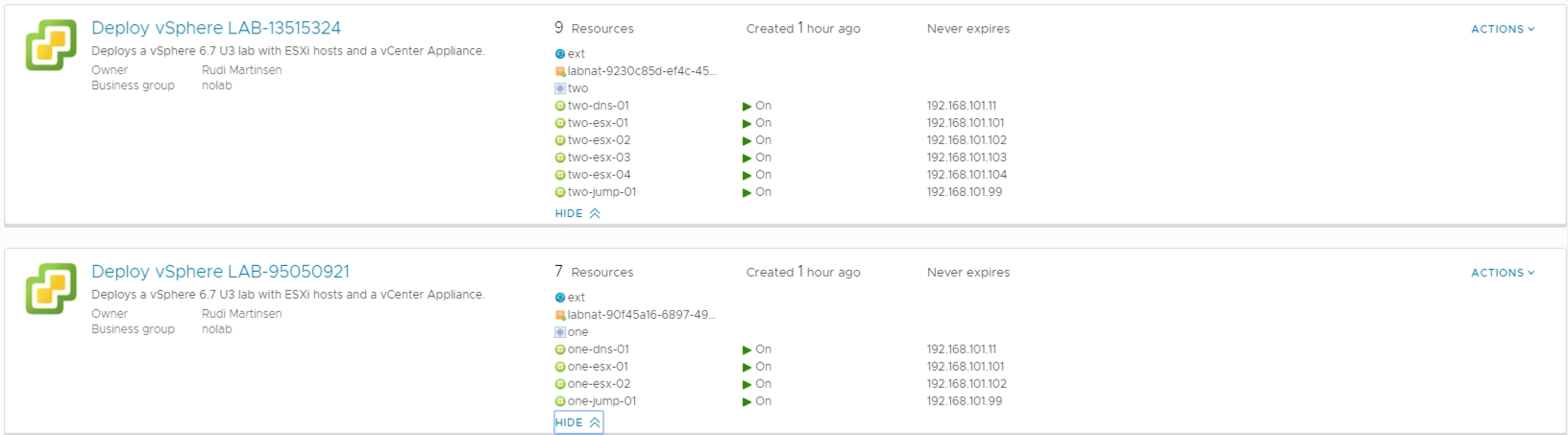

As you can see in this screenshot from the environment, there are two deployments, both using the same network segment and IP addresses, but they're completely separated.
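To make the "same addresses, still separated" idea a bit more concrete, here's a minimal Python sketch of what the NAT in front of each deployment achieves. It only models the concept and doesn't talk to NSX-T at all. The deployment names and the external addresses are made up for illustration; the internal subnet is the 192.168.101.0/24 network I use for the lab-nat profile in this post.

```python
import ipaddress

# Internal subnet shared by every deployment (the lab-nat network in this post).
INTERNAL_NET = ipaddress.ip_network("192.168.101.0/24")

# Hypothetical deployments and external addresses, for illustration only.
deployments = {
    "lab-a": {"internal": INTERNAL_NET, "external_ip": ipaddress.ip_address("10.0.0.11")},
    "lab-b": {"internal": INTERNAL_NET, "external_ip": ipaddress.ip_address("10.0.0.12")},
}


def source_nat(deployment: str, internal_ip: str) -> ipaddress.IPv4Address:
    """Return the address the outside network sees for traffic leaving a deployment."""
    entry = deployments[deployment]
    if ipaddress.ip_address(internal_ip) not in entry["internal"]:
        raise ValueError(f"{internal_ip} is not inside {entry['internal']}")
    return entry["external_ip"]


# Both labs use exactly the same internal subnet ...
same_subnet = deployments["lab-a"]["internal"].overlaps(deployments["lab-b"]["internal"])

# ... but the same internal address leaves each lab with a different external address.
print(same_subnet)
print(source_nat("lab-a", "192.168.101.10"))
print(source_nat("lab-b", "192.168.101.10"))
```

Since the only thing that differs between two labs is the external address on their own router, nothing inside one lab can collide with the other.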

This is built in vRealize Automation 7.6 and we're deploying vSphere 6.7 U3 resources. Even though vRA 8.0 has been released, I find that the customers I support are still heavily invested in 7.6 and will not upgrade/move for quite a while.

To deploy the VCSA and configure the different components I'm using vRO workflows rather than Software Components, which could be an alternative.

Also, I'm still quite new to vRA, so I wanted to expand my knowledge and experience in 7.x. Finally, I think it will be a nice scenario for migrating this solution from 7.6 to 8.x when we're ready for that.

Resources

Before we dive into the solution I want to list the following blogs that I've either used code from or used as guides/inspiration.

- virtuallyghetto.com (William Lam), Nested Virtualization

- virtualinsanity.com, Build your very own VMware Hands-On-Labs!

- virtualizestuff.com, vRA: Developing a NSX-T Blueprint – Part1

- VMware documentation, Add an NSX-T On-Demand NAT or NSX-T On-Demand Routed Network Component

A quick overview of the physical environment

In our lab environment we have two (well actually four, but for this setup we're only using two) vCenters, one for management and one for workloads. In the management vCenter we have deployed NSX manager and vRA.

All of the nested lab environments will be deployed to the workload vCenter which has five physical ESXi hosts. Of course you could have both NSX/vRA in the same vCenter as your nested environment.

I'm not very proficient in NSX-T; the setup in our lab has been done by one of my teammates, Nils Kristiansen.

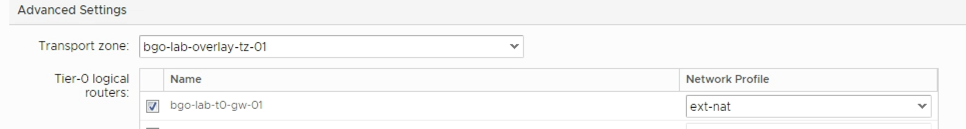

In NSX-T we have two transport zones configured, one VLAN and one Overlay (we will use the Overlay transport zone in our setup). There's also an NSX Edge cluster configured with two Edge hosts. Finally we have a T-0 Gateway which our on-demand T-1 routers will connect to later on.
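As a rough mental model of that topology, here's a small Python sketch: one shared T-0 gateway, and one T-1 router per deployment attached to it on the overlay transport zone. This is not NSX-T API code. The class names, the `t1-<deployment>` naming and the deployment names are hypothetical and only there to illustrate the structure.

```python
from dataclasses import dataclass, field


@dataclass
class Tier1Router:
    name: str
    transport_zone: str  # the overlay transport zone in this setup


@dataclass
class Tier0Gateway:
    name: str
    tier1_routers: list[Tier1Router] = field(default_factory=list)

    def attach_tier1(self, deployment: str, transport_zone: str = "overlay") -> Tier1Router:
        """Create an on-demand T-1 router for a deployment and link it to this T-0."""
        router = Tier1Router(name=f"t1-{deployment}", transport_zone=transport_zone)
        self.tier1_routers.append(router)
        return router


# Hypothetical names: one shared T-0, one T-1 per nested lab deployment.
t0 = Tier0Gateway(name="t0-lab")
for deployment in ("lab-a", "lab-b"):
    t0.attach_tier1(deployment)

print([router.name for router in t0.tier1_routers])
```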

vRA configuration

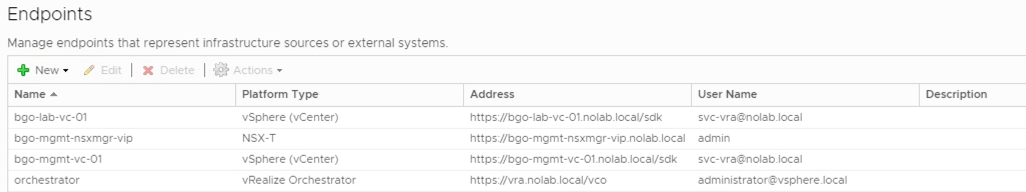

In vRA I have set up an endpoint to each of the vCenters and to the NSX Manager. The NSX Manager needs to be associated with the vCenters (technically it is sufficient to associate it with the vCenter deploying the resources).

Compute Resources and reservations

We also need Fabric groups, Compute Resources and reservations, just as for a "normal" VM deployment. I won't go through all of that in this post. In fact, I'm using the existing vRA reservations for now. If we see that we need to limit or use specific resources, we can change this later on.

Network profiles

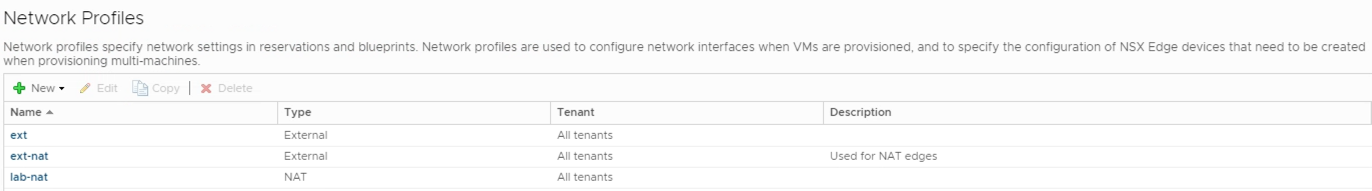

I struggled a bit with the network profiles, but ended up with the following:

One external network profile, ext, which is the "normal" network profile for deployment of resources in the environment. In this environment it has no network ranges configured; it just makes use of the DHCP server on the network. Our jump host will use this for one of its network adapters.

One external network profile, ext-nat, which is used for the NSX NAT edges. Here I've set a network range and reserved some IP addresses on the external network. Each of the deployments will have one NSX edge (T-1 router) configured which will use this network. I'm pretty sure I could have combined the ext and ext-nat networks if I had configured network ranges in the first, or let DHCP handle the addressing. For now I want to specify the address pool myself, hence the separate profile.

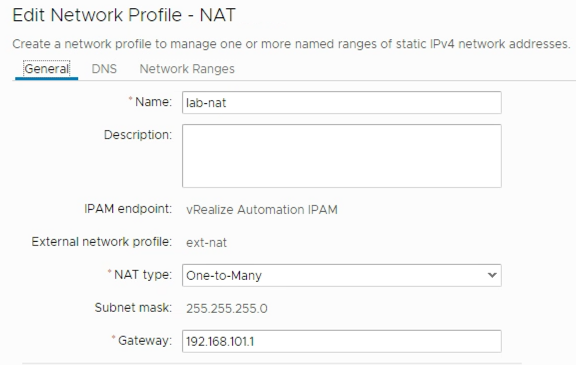

One NAT (one-to-many) network, lab-nat, which will create the logical NSX network. This is connected to the ext-nat network so it knows where to connect the NSX T-1 router. In this profile I specify the details of the logical NAT network. I'm using a 192.168.101.0/24 network for all deployments, and all of the deployed resources will be connected to this network.
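To summarize how the three profiles relate to each other, here's a minimal Python sketch that models them as plain data and checks that the NAT profile points at an external profile. This is only an illustration and not how vRA stores or exposes network profiles. The `NetworkProfile` class, its field names and the ext-nat address range are assumptions I've made up for the example; only the profile names and the 192.168.101.0/24 subnet come from the setup above.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NetworkProfile:
    name: str
    kind: str                               # "external" or "nat"
    ip_range: tuple = ()                    # (start, end); empty means "rely on DHCP"
    external_profile: Optional[str] = None  # only used by NAT profiles
    subnet: Optional[str] = None            # only used by NAT profiles


PROFILES = {
    "ext": NetworkProfile("ext", "external"),
    # Hypothetical range: the real reserved addresses are not shown in this post.
    "ext-nat": NetworkProfile("ext-nat", "external", ip_range=("10.0.0.11", "10.0.0.20")),
    "lab-nat": NetworkProfile("lab-nat", "nat", external_profile="ext-nat",
                              subnet="192.168.101.0/24"),
}


def addresses(profile: NetworkProfile) -> list:
    """Expand a profile's start/end range into individual addresses."""
    if not profile.ip_range:
        return []
    start, end = (ipaddress.ip_address(a) for a in profile.ip_range)
    return [ipaddress.ip_address(i) for i in range(int(start), int(end) + 1)]


def validate(profiles: dict) -> None:
    """Check that every NAT profile references an existing external profile."""
    for profile in profiles.values():
        if profile.kind != "nat":
            continue
        parent = profiles.get(profile.external_profile)
        if parent is None or parent.kind != "external":
            raise ValueError(f"{profile.name} must reference an external profile")
        ipaddress.ip_network(profile.subnet)  # raises ValueError if malformed


validate(PROFILES)
print(len(addresses(PROFILES["ext-nat"])), "addresses available for T-1 routers")
```

With a pool like this, the number of addresses in the ext-nat range effectively caps how many lab deployments can run at the same time, since each deployment's T-1 router takes one of them.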

Finally, the reservation(s) to be used need to be updated with the correct network profile. I'll leave the "normal" network profile mapped to the correct portgroup, so I'm only specifying which network profile the T-0 gateway will use, namely the ext-nat profile described above.

Summary

That's a wrap on this part, which covered the background for the project, the underlying environment and the vRA configuration. In the next part we will build the project in vRA, so stay tuned!