vROps Management Pack for Kubernetes

Overview

In this post we'll take a look at the vRealize Operations (vROps) Management Pack for Kubernetes.

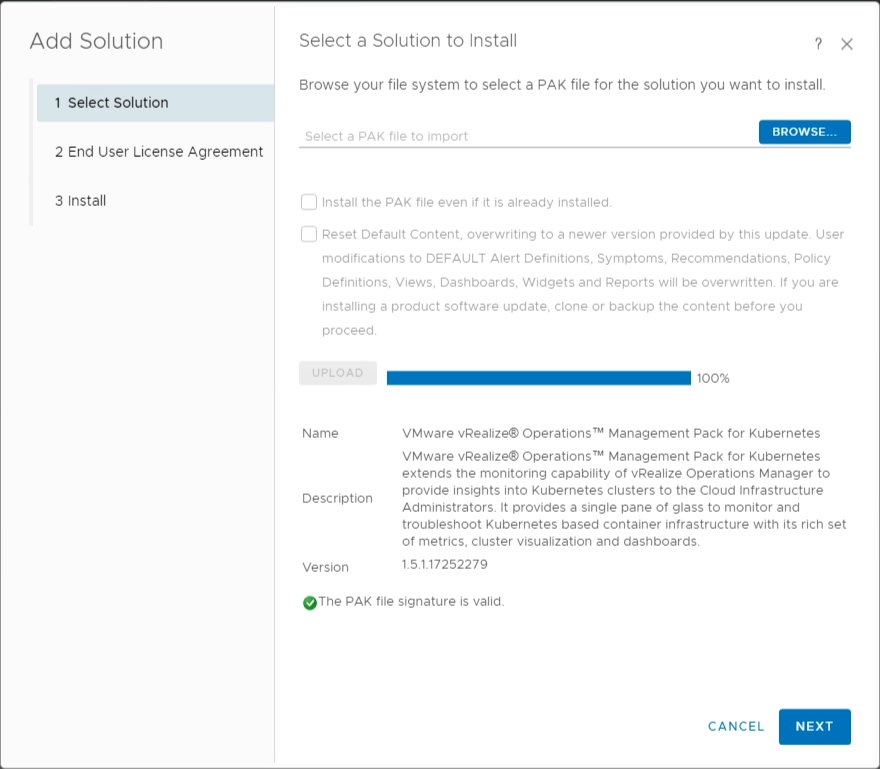

The current version is 1.5.1, and our vRealize Operations Manager instance is running version 8.2. Note that I'm referring to the on-premises version of vROps in this post.

The management pack supports monitoring, troubleshooting and capacity optimization for Kubernetes clusters. In addition, it has a few other promising capabilities:

- Auto-discovery of Tanzu Kubernetes Grid Integrated (TKGI, formerly PKS) and Tanzu Mission Control (TMC) Kubernetes clusters (note: currently only AWS clusters are supported through the TMC integration)

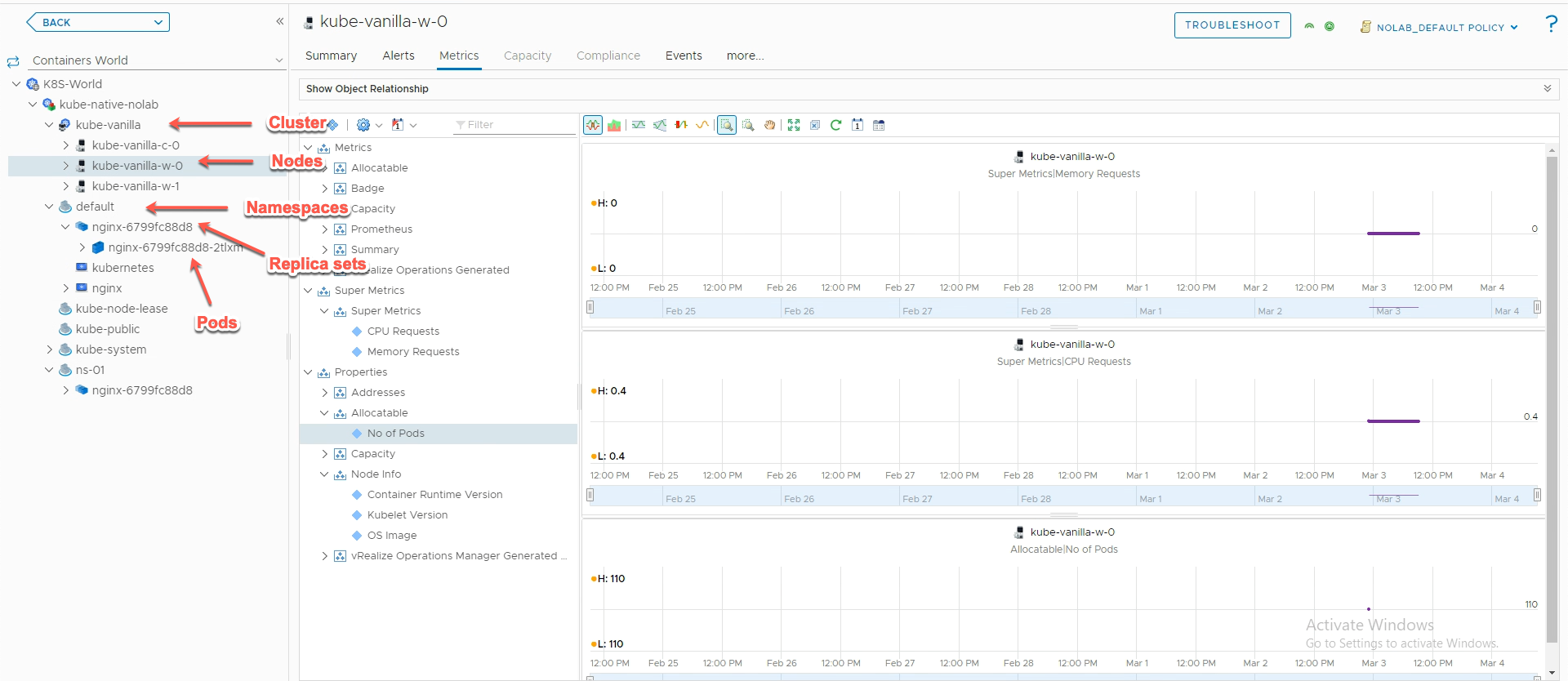

- Visualization of Kubernetes cluster topologies, including Namespaces, ReplicaSets, Nodes, Pods and Containers

- Performance Monitoring

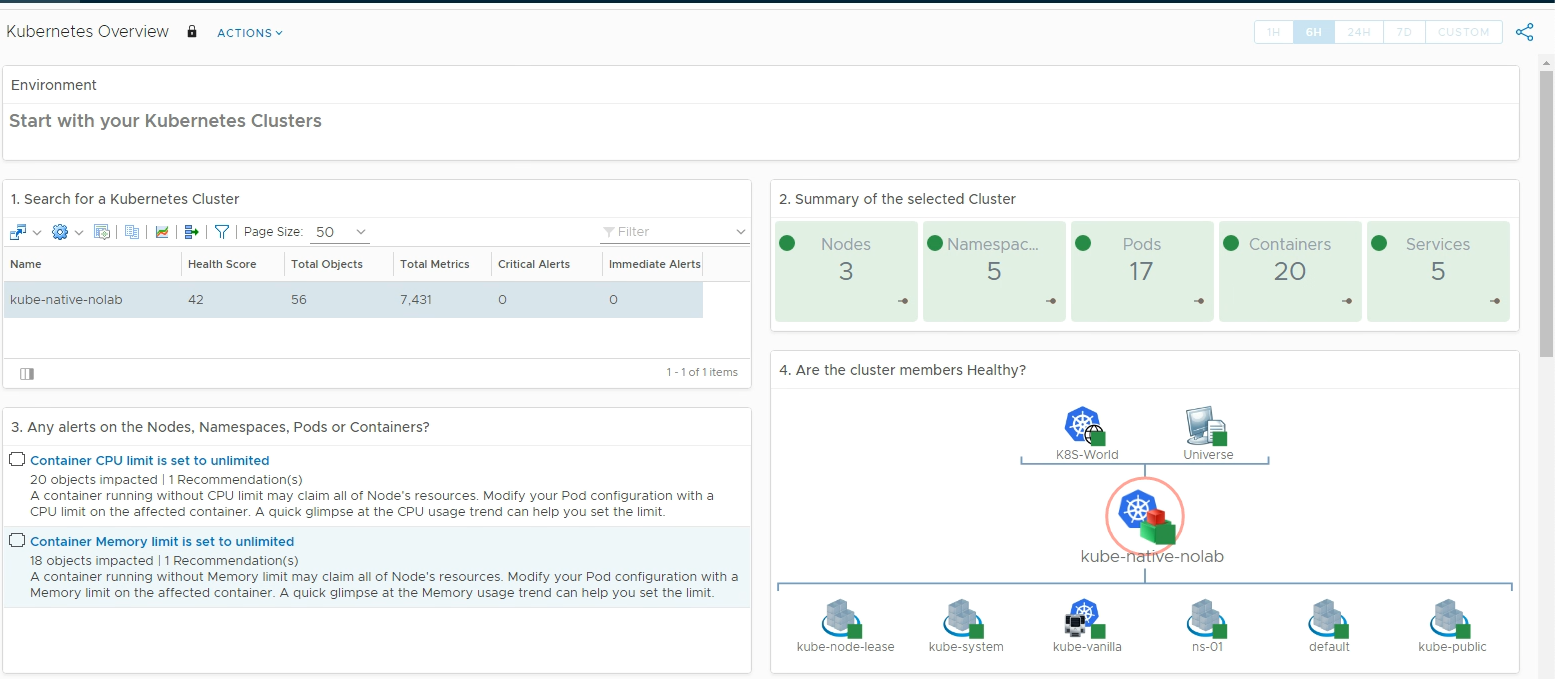

- Inventory dashboards for Kubernetes clusters

- Alerts for Kubernetes clusters

- Mapping Kubernetes nodes to virtual machines

- Reporting on capacity, configuration and inventory of clusters or pods

Sounds interesting, let's take a closer look!

Installing

The management pack can be downloaded from the VMware Marketplace (link to the 1.5.1 version).

The management pack is free, but you need to log in to the Marketplace to download it.

After downloading the file we'll head over to our vROps instance to import it.

Management packs are imported from the Administration -> Repository view by clicking the Add/Upgrade button. Browse to and select the downloaded .pak file, then click Upload.

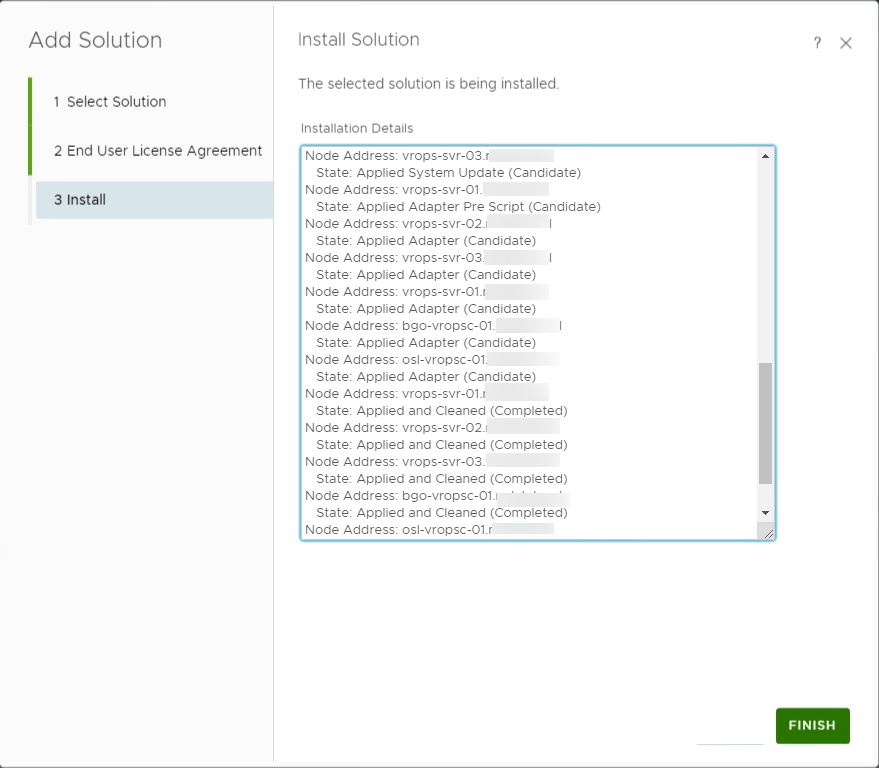

After the file has been uploaded, click Next, read through and accept the EULA, and click Next again to install the management pack. After a short while the management pack should be installed on all the nodes in the cluster.

Configuring

Now that the management pack has been installed, we can go ahead and configure it. Note that vROps doesn't monitor anything inside Kubernetes on its own; it needs a monitoring endpoint to connect to. Think of it the way vROps handles VMs in vCenter: vROps connects to the vCenter SDK and pulls the metrics that vCenter collects from the hosts and VMs it manages.

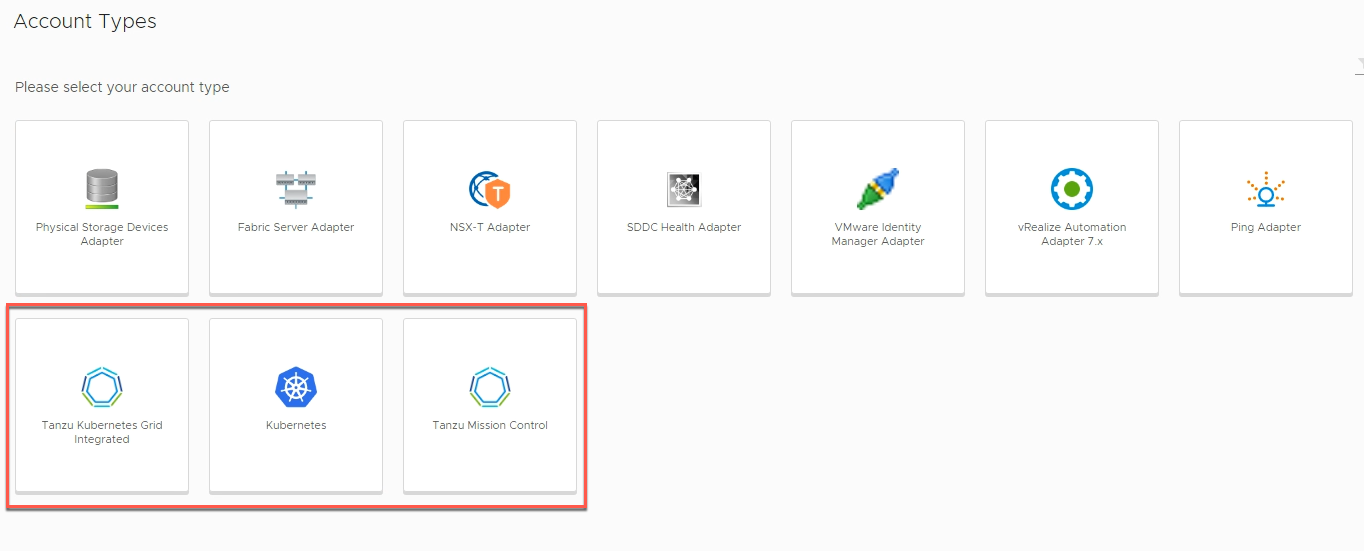

The management pack can connect to native Kubernetes clusters as well as TKGI and TMC managed clusters. In this post I'll take a look at native clusters and TMC.

Connect vROps to a Kubernetes cluster using Prometheus

vROps supports using either cAdvisor or Prometheus as the service to connect to a Kubernetes cluster.

There are a couple of posts out there that go through the cAdvisor approach, and since I already have a Prometheus server in my environment configured to scrape a native Kubernetes cluster, we'll connect vROps to that.
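Before pointing vROps at Prometheus, it can be worth confirming that Prometheus actually has healthy scrape targets for the cluster. The sketch below is my own addition, not something from the management pack documentation: it calls the Prometheus HTTP API endpoint `/api/v1/targets` and counts active targets per job and health state. The Prometheus address is a placeholder, and the job names depend entirely on your own scrape configuration.

```python
import json
import urllib.request
from collections import Counter


def targets_url(prometheus_base: str) -> str:
    """Build the Prometheus HTTP API URL that lists scrape targets."""
    return prometheus_base.rstrip("/") + "/api/v1/targets"


def fetch_json(url: str, timeout: float = 10.0) -> dict:
    """GET a URL and decode the JSON response body."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def summarize_targets(payload: dict) -> Counter:
    """Count active targets per (job, health) in a /api/v1/targets response."""
    if payload.get("status") != "success":
        raise ValueError(f"Prometheus returned status {payload.get('status')!r}")
    counts: Counter = Counter()
    for target in payload.get("data", {}).get("activeTargets", []):
        # Prometheus reports health as "up", "down" or "unknown".
        job = target.get("labels", {}).get("job", "<no job label>")
        counts[(job, target.get("health", "unknown"))] += 1
    return counts
```

For example, `summarize_targets(fetch_json(targets_url("http://prometheus.example.local:9090")))` (hypothetical host) should list your Kubernetes jobs with `up` targets if scraping works.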

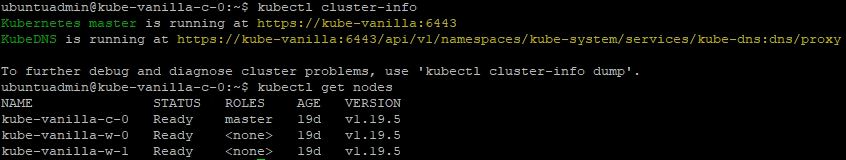

Connecting vROps to a Kubernetes cluster is done by adding an account in the Other Accounts view.

Add account

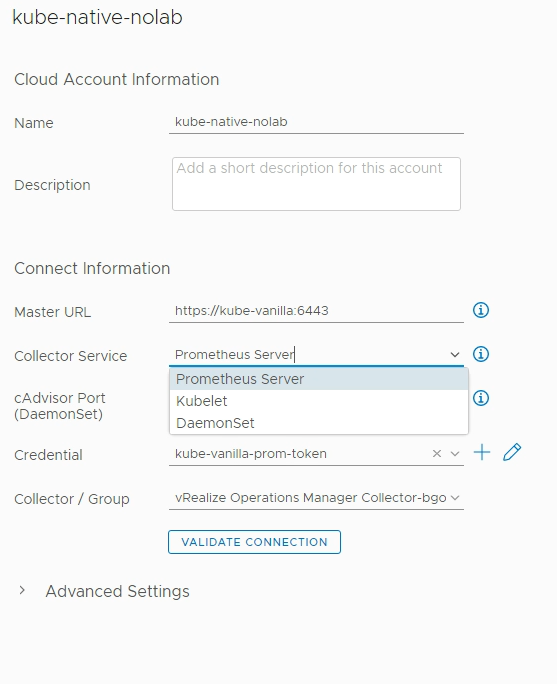

I'll specify the address of the Kubernetes API server, select Prometheus Server as the collector service, and add the credential.

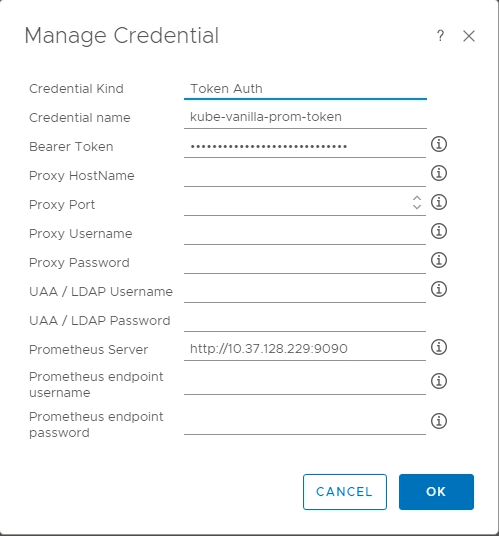

The credential uses token-based authentication, and it also needs the address of my Prometheus server. The documentation states that a username and password for the Prometheus server are required as well, but I haven't configured authentication on Prometheus, so I'll skip those.
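If you want to check the token before adding it to vROps, a quick test is to call the Kubernetes API server with it as a bearer token. This is a minimal sketch assuming the token belongs to a service account that is allowed to list nodes; `/api/v1/nodes` is only a convenient probe, and the permissions the management pack actually needs are described in its documentation. The server address and token are placeholders.

```python
import json
import ssl
import urllib.request


def api_request(server: str, token: str, path: str = "/api/v1/nodes") -> urllib.request.Request:
    """Build a GET request to the Kubernetes API server using bearer-token auth."""
    return urllib.request.Request(
        server.rstrip("/") + path,
        headers={"Authorization": f"Bearer {token}", "Accept": "application/json"},
    )


def check_token(server: str, token: str, path: str = "/api/v1/nodes",
                verify_tls: bool = True, timeout: float = 10.0) -> dict:
    """Call the API server with the token; raises urllib.error.HTTPError on 401/403."""
    ctx = ssl.create_default_context()
    if not verify_tls:
        # Lab use only, comparable to accepting the untrusted certificate in vROps.
        ctx.check_hostname = False
        ctx.verify_mode = ssl.CERT_NONE
    with urllib.request.urlopen(api_request(server, token, path),
                                context=ctx, timeout=timeout) as resp:
        return json.load(resp)
```

A `401` means the token itself is rejected, while a `403` means it authenticates but lacks permission for that path.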

I've also specified which collector/group should fetch the data.

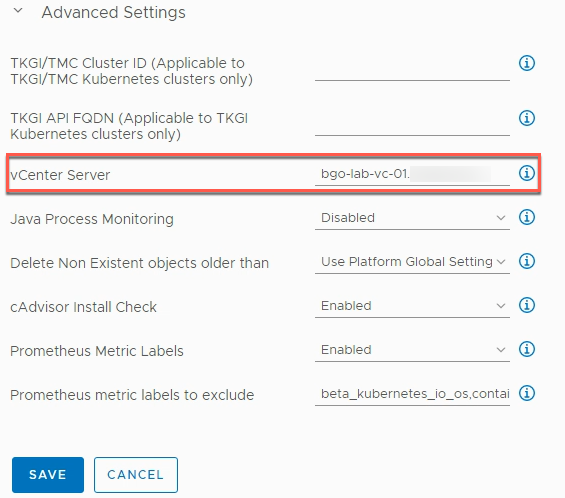

In the Advanced settings we can also add the vCenter server details, which lets vROps correlate the Kubernetes nodes with their VMs. That's a nice feature.

With all this in place, I click Validate Connection, accept the untrusted certificate, and data should hopefully start being collected.

In my environment, however, I get a warning stating that there's a problem accessing the URL.

I tried multiple variants of the URL, but without luck. In the end I left it as is, and when I later checked the Accounts page, the account status showed OK.
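When the validation complains about a URL, it helps to test each variant from a machine on the same network as the vROps collector. The helper below is a hypothetical sketch, not part of vROps: it requests a URL and reports whether the server answered, returned an HTTP error, failed TLS verification, or could not be reached at all.

```python
import ssl
import urllib.error
import urllib.request


def probe(url: str, timeout: float = 5.0) -> str:
    """Return a one-line verdict for a URL instead of raising."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return f"OK {resp.status}"
    except urllib.error.HTTPError as err:
        # The server answered, so host and port are right; path or auth may not be.
        return f"HTTP {err.code}"
    except urllib.error.URLError as err:
        if isinstance(err.reason, ssl.SSLError):
            # For example a self-signed certificate that vROps asks you to accept.
            return f"TLS ERROR ({err.reason})"
        return f"UNREACHABLE ({err.reason})"
    except OSError as err:
        # Timeouts while reading the response can surface as plain OSError.
        return f"UNREACHABLE ({err})"
```

Running `probe()` against both the `http://` and `https://` forms of the Prometheus and Kubernetes addresses quickly shows which combination actually answers.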

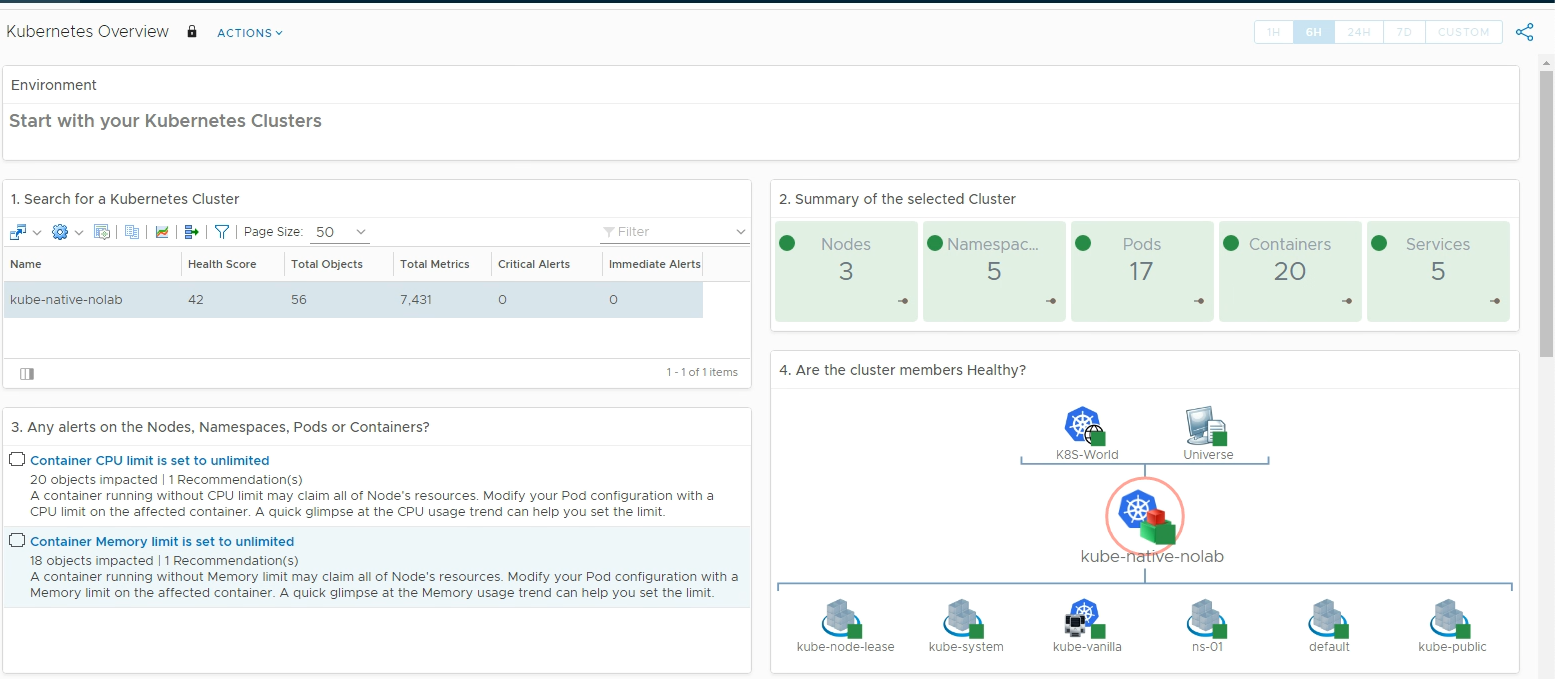

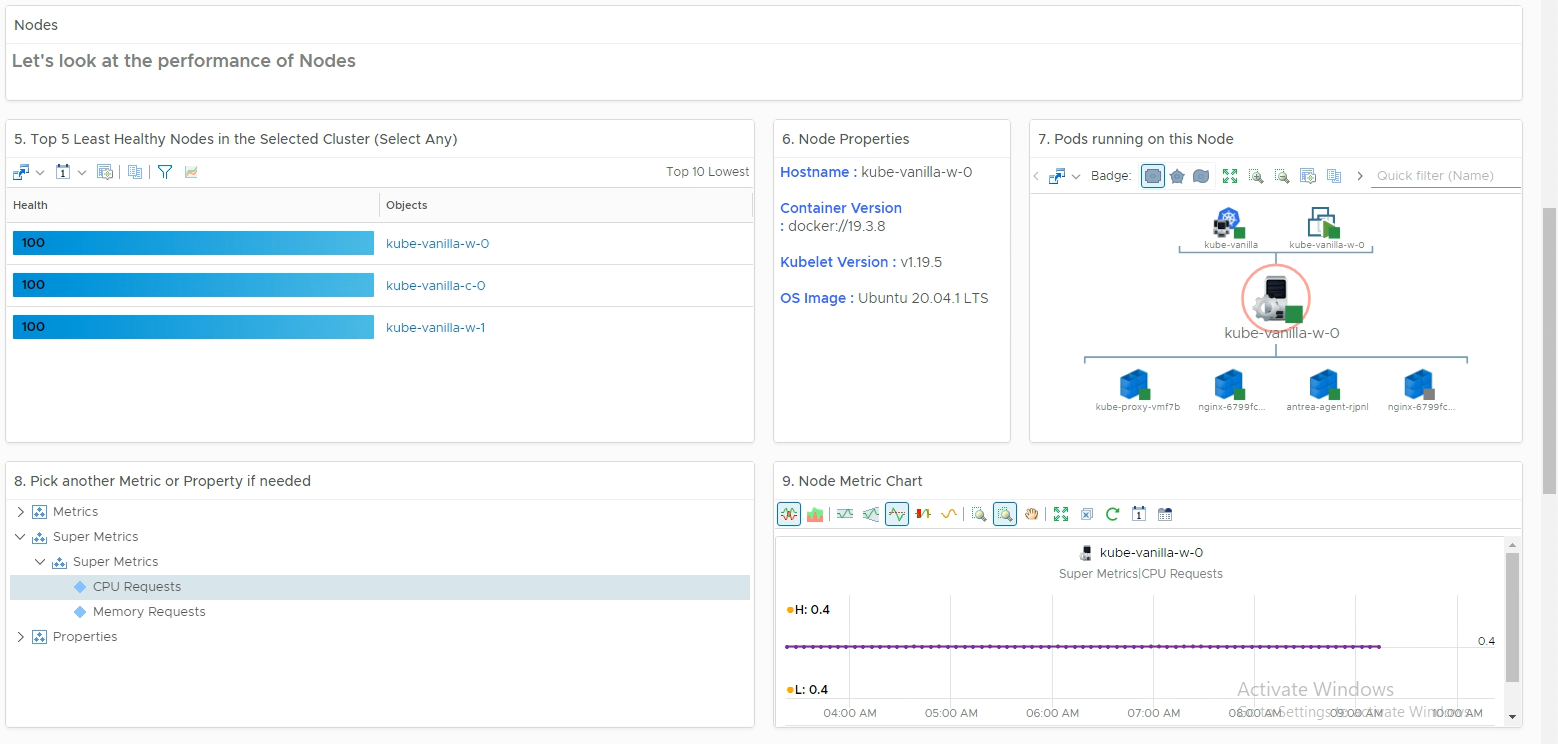

Environment data and Dashboards

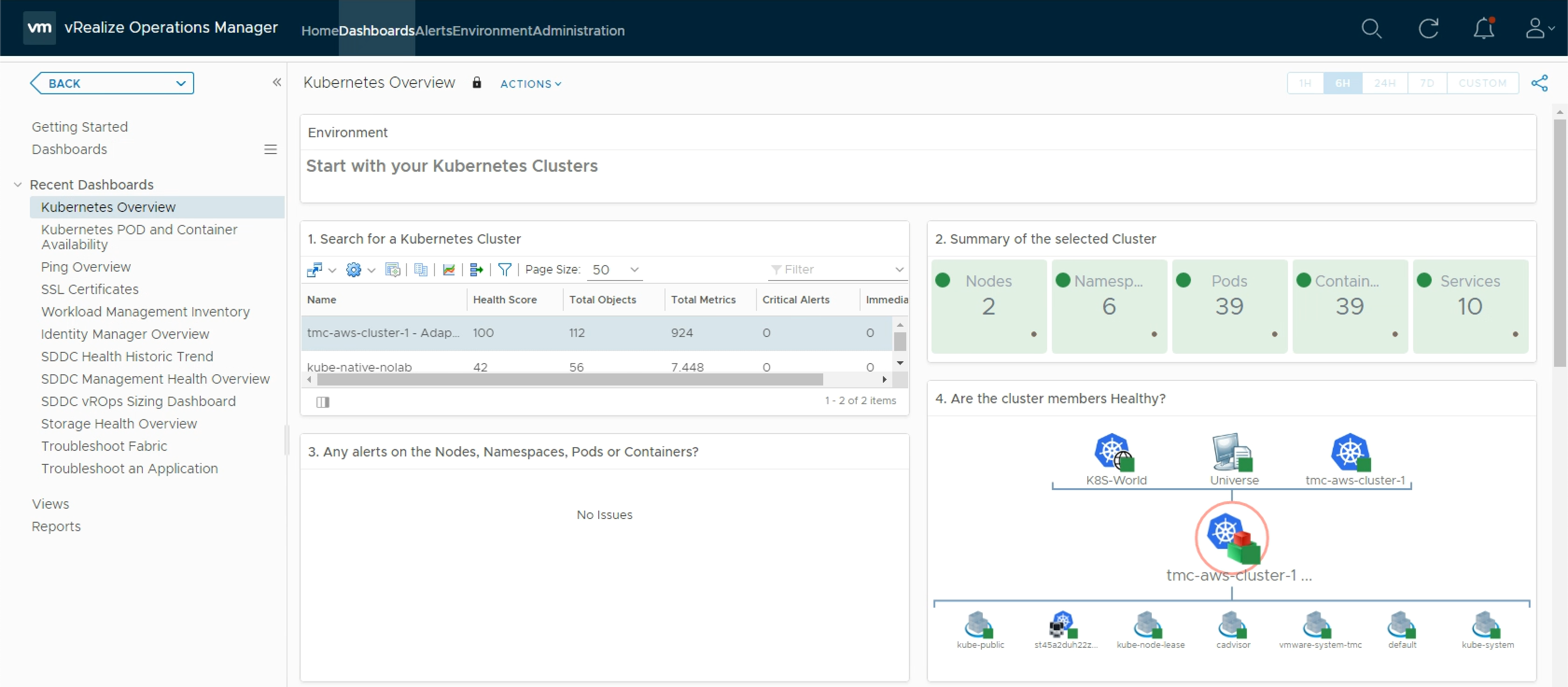

With the account in place, data should start showing up in our Environment view, and the dashboards provided by the management pack should come to life.

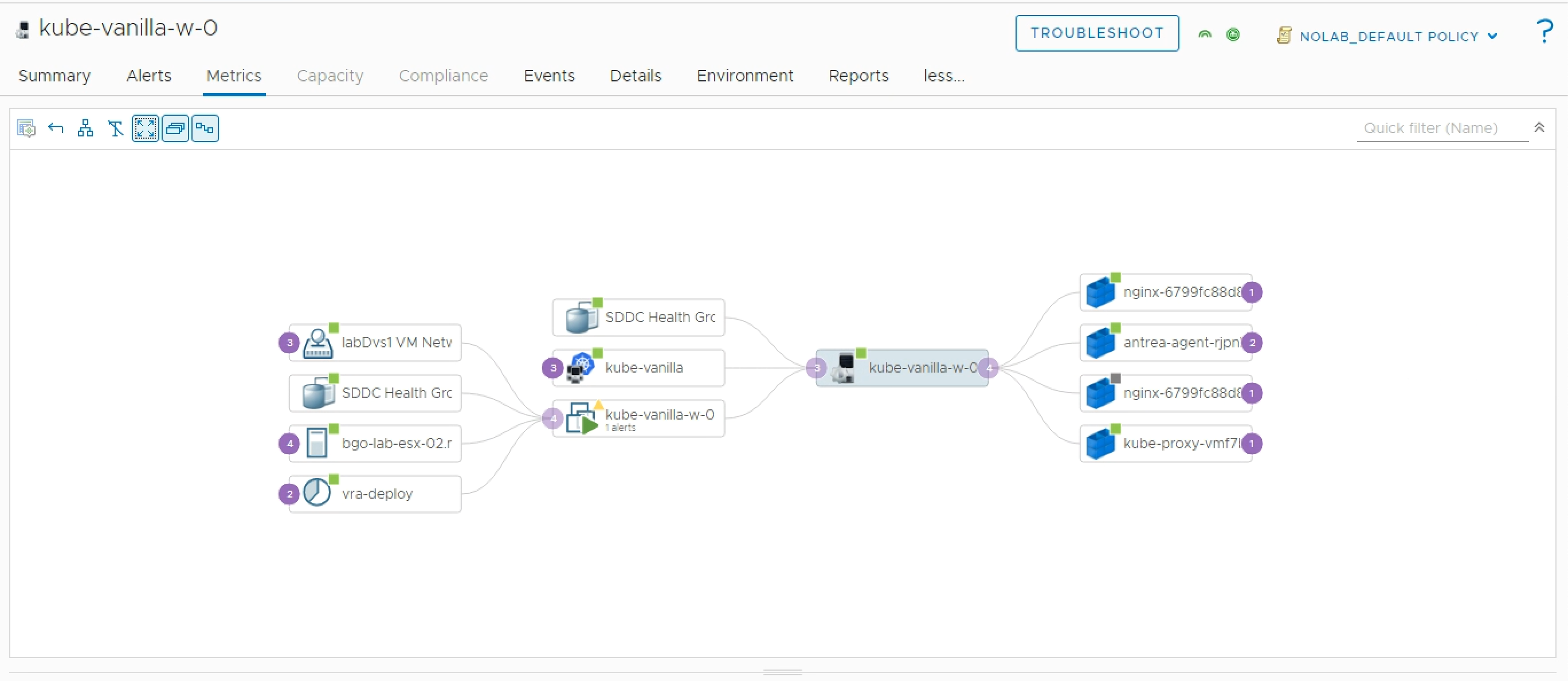

Correlate with VI workloads

Since this cluster is running in the vCenter environment that vROps is connected to, I can also correlate it with the VI workloads.

Below is a screenshot of a Node with its object relationships: Pods on the right-hand side, and the virtual environment, e.g. its VM and ESXi host relations, on the left.

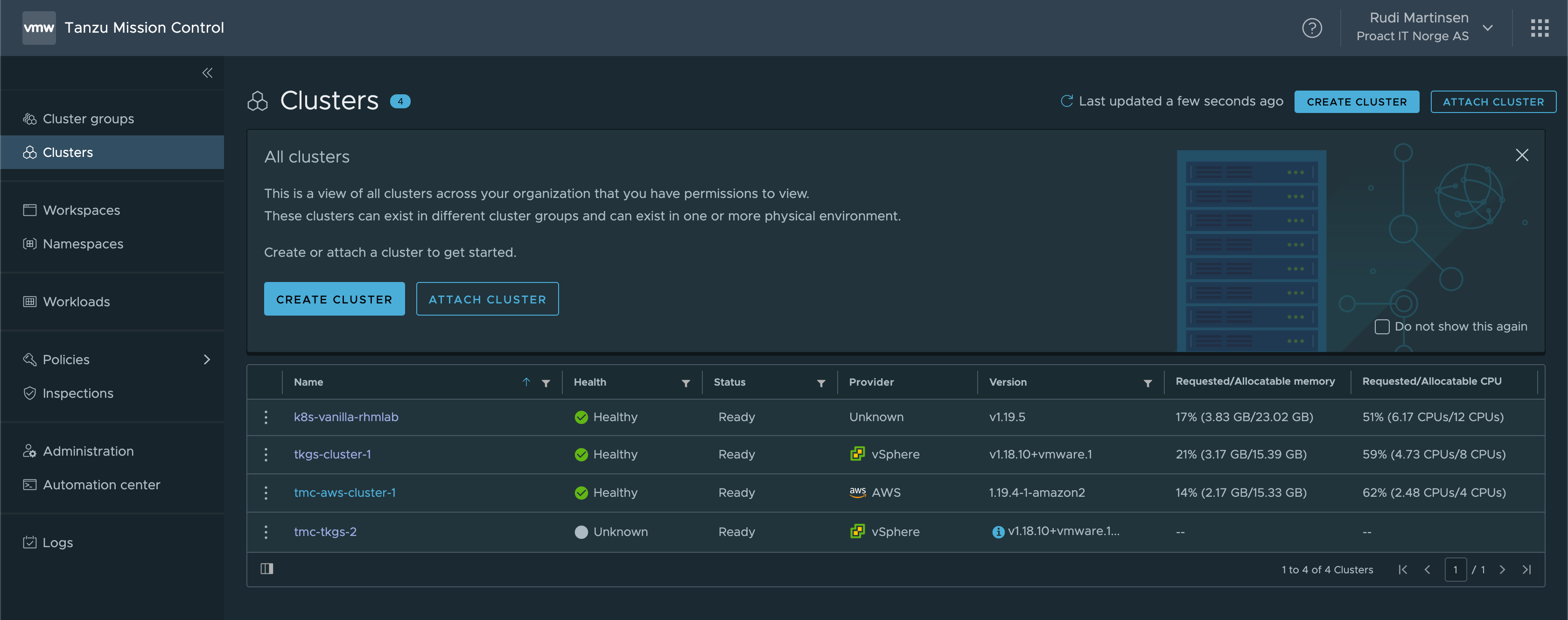

Connect vROps to Tanzu Mission Control

I've written a few blog posts on Tanzu Mission Control (TMC) already, so be sure to take a look at those if you're new to it.

Now let's see how we can integrate this with TMC. The integration promises to automatically create Kubernetes adapters for the clusters we have connected to TMC, which is kind of neat.

Currently, in version 1.5.1, the TMC integration only supports Tanzu Kubernetes clusters running on AWS. This is noted in the Release Notes for version 1.5.1, but not listed as a requirement in the documentation. I spent quite some time troubleshooting this before I found out.

In my TMC I have one AWS Tanzu Kubernetes cluster, so let's see how vROps picks it up through TMC.

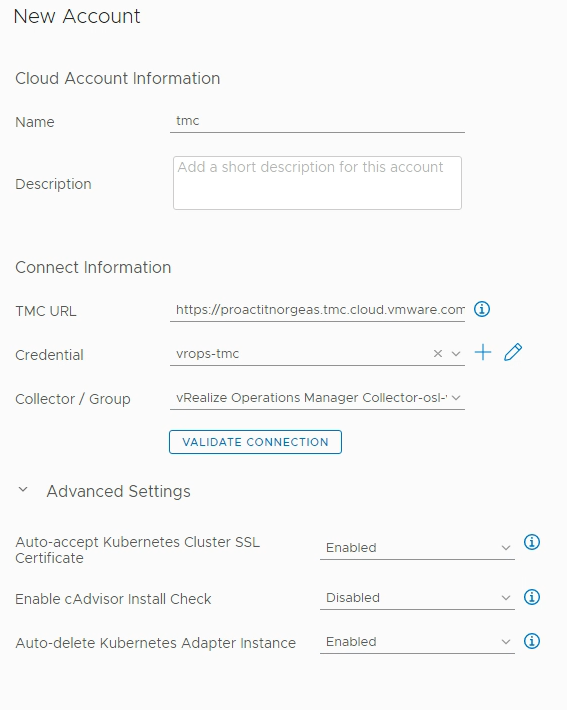

I'll add a new account for the TMC adapter.

In the new account view I'll add the details for the TMC connection, including the URL and the credential. Note that you need an API token for the connection; preferably create a new one dedicated to it. In the Advanced settings we can decide whether to accept untrusted certificates and whether to auto-delete Kubernetes adapters.
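To confirm the API token works before handing it to vROps, you can exchange it for an access token yourself. This sketch assumes, based on my understanding of VMware Cloud Services (CSP), that TMC API tokens are exchanged as a `refresh_token` at the CSP endpoint below; verify the URL against VMware's current documentation. It is not the management pack's own code.

```python
import json
import urllib.parse
import urllib.request

# Assumption: CSP endpoint that exchanges an API (refresh) token for an access token.
CSP_AUTHORIZE_URL = (
    "https://console.cloud.vmware.com/csp/gateway/am/api/auth/api-tokens/authorize"
)


def token_exchange_request(api_token: str) -> urllib.request.Request:
    """Build the form-encoded POST that trades the API token for an access token."""
    body = urllib.parse.urlencode({"refresh_token": api_token}).encode()
    return urllib.request.Request(
        CSP_AUTHORIZE_URL,
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def get_access_token(api_token: str, timeout: float = 15.0) -> str:
    """Perform the exchange; an HTTPError here suggests the token is invalid or expired."""
    with urllib.request.urlopen(token_exchange_request(api_token), timeout=timeout) as resp:
        return json.load(resp)["access_token"]
```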

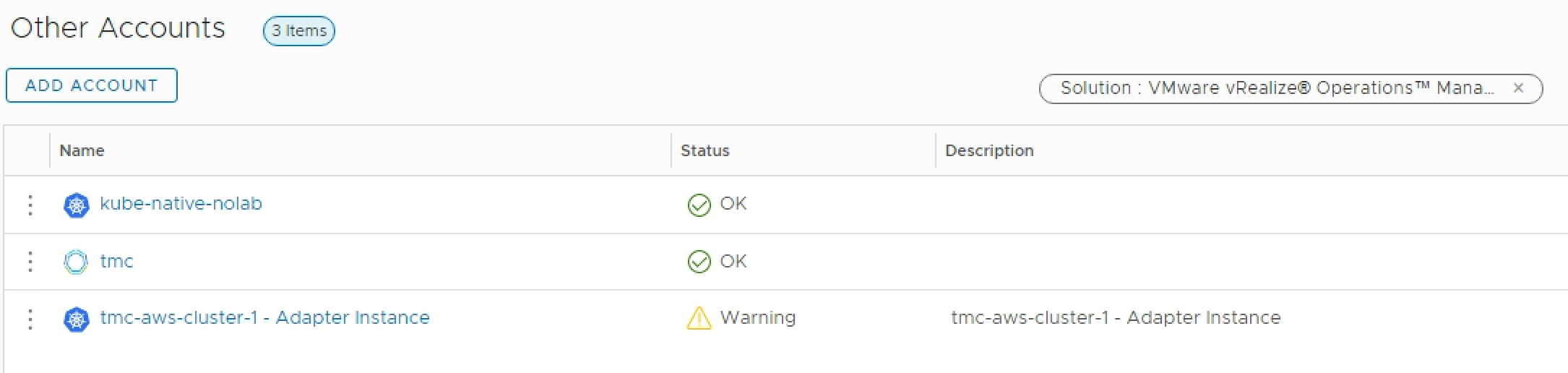

After a little while, data collection should fetch the data about our TMC cluster(s) and create a new adapter for us.

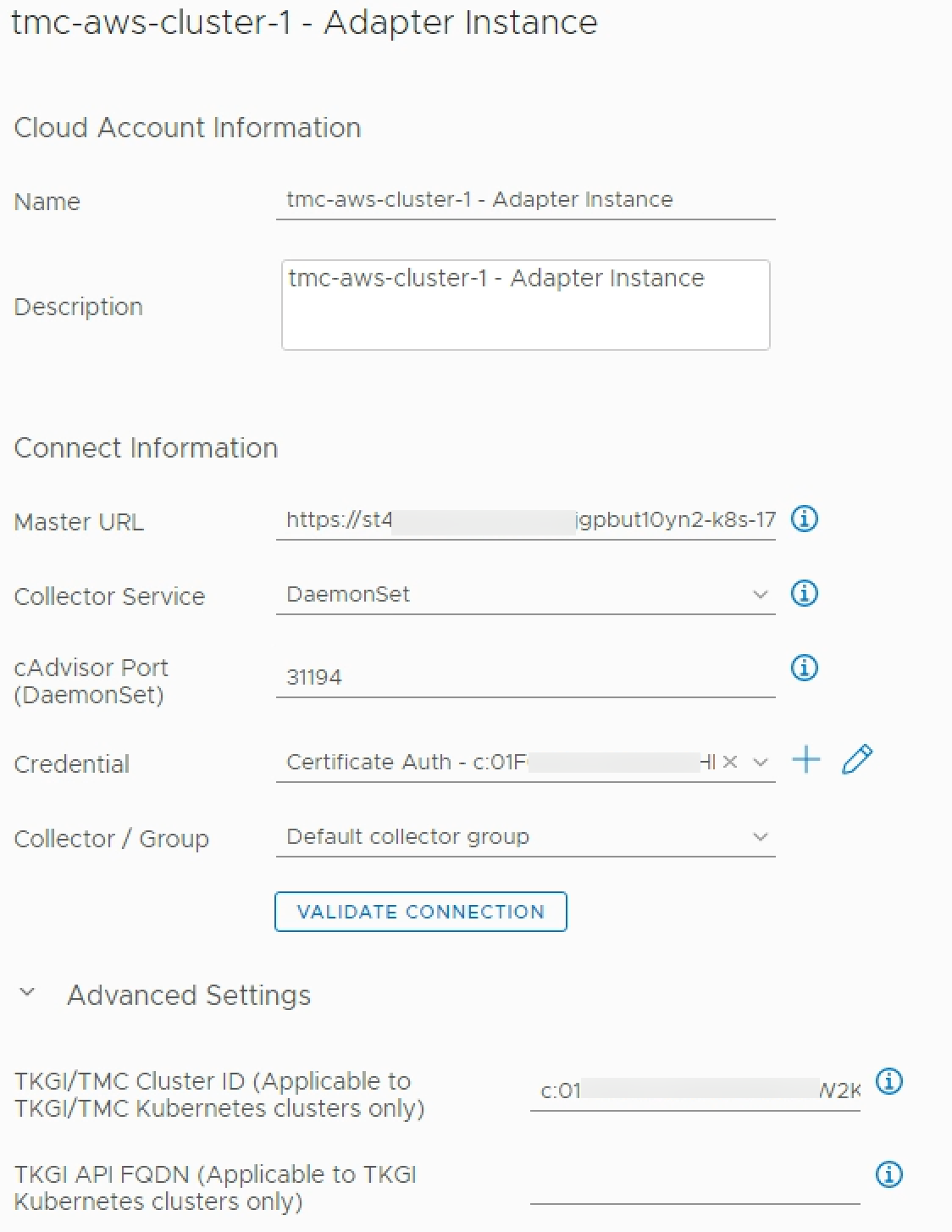

Note that, per the management pack documentation, the clusters must have the cAdvisor daemon set configured on port 31194.

cAdvisor daemon set

We'll add the cAdvisor daemon set as per the documentation. Sample definitions for configuring it are available in the management pack documentation.

In my cluster I also created a service account for cAdvisor, along with the role and role binding it needs to run, based on the examples in cAdvisor's GitHub repository.

With this set up, vROps should be updated with data about our Tanzu cluster running in AWS.
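If data doesn't show up, one thing to check is whether cAdvisor actually answers on port 31194 on every node. This hypothetical helper reads the output of `kubectl get nodes -o json`, picks each node's `InternalIP`, and requests cAdvisor's Prometheus `/metrics` endpoint on that port. Run it from somewhere that can reach the node addresses; whether the vROps collector can reach them depends on your network.

```python
import json
import urllib.request


def load_nodes(path: str) -> dict:
    """Load a file produced by `kubectl get nodes -o json`."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def internal_ips(nodes_json: dict) -> list[str]:
    """Extract each node's InternalIP address from the node list."""
    ips = []
    for node in nodes_json.get("items", []):
        for addr in node.get("status", {}).get("addresses", []):
            if addr.get("type") == "InternalIP":
                ips.append(addr["address"])
    return ips


def cadvisor_urls(node_addresses: list[str], port: int = 31194,
                  path: str = "/metrics") -> list[str]:
    """Build the cAdvisor URL to probe on each node."""
    return [f"http://{addr}:{port}{path}" for addr in node_addresses]


def check_nodes(nodes_json: dict, timeout: float = 5.0) -> dict:
    """Map each cAdvisor URL to its HTTP status, or to an error message."""
    results = {}
    for url in cadvisor_urls(internal_ips(nodes_json)):
        try:
            with urllib.request.urlopen(url, timeout=timeout) as resp:
                results[url] = resp.status
        except OSError as err:  # URLError and HTTPError are OSError subclasses
            results[url] = f"error: {err}"
    return results
```

For example, save the node list with `kubectl get nodes -o json > nodes.json` and then run `check_nodes(load_nodes("nodes.json"))`.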

Summary

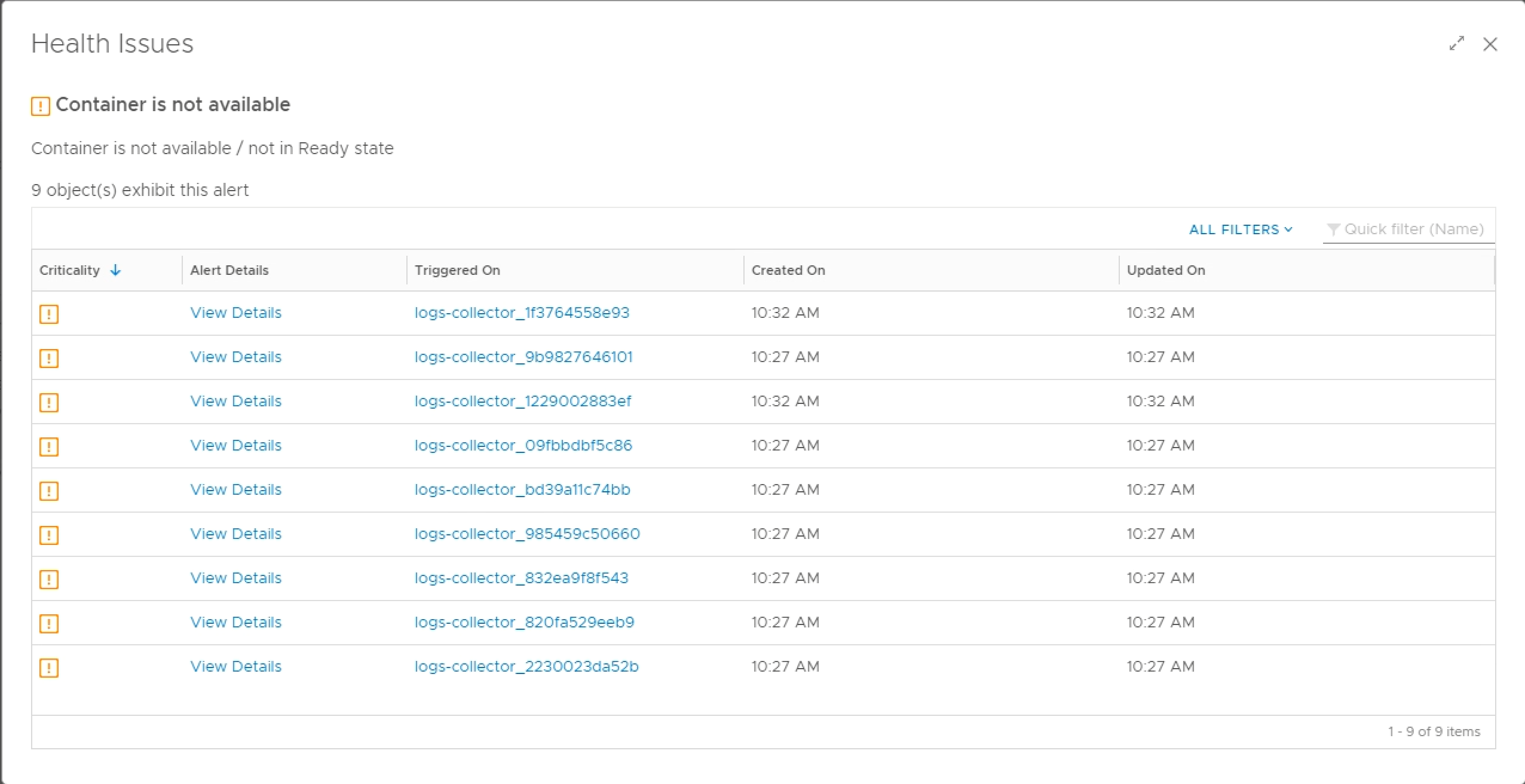

Even with just a couple of clusters added to vROps, we can see the value in the solution. Being able to get cluster details, issues, alerts and monitoring, like the issue list in the screenshot, in one place is quite nice.

I really hope that the TMC integration will support Tanzu Kubernetes clusters running on other cloud providers in the near future. The ability to automatically create (and delete) Kubernetes adapters is really nice.

Thanks for reading!