CKA Study notes - Daemon Sets vs Replica Sets vs Deployments

Overview

This will post will be part of my Certified Kubernetes Administrator series and will cover the concepts of Daemon Sets, Replica sets and Deployments, and the differences between them.

Update january 2024: This post is over three years old and some things might have changed. An updated version of the post can be found here

Note #1: I'm using documentation for version 1.19 in my references below as this is the version used in the current (dec 2021) CKA exam. Please check the version applicable to your usecase and/or environment

Note #2: This is a post covering my study notes preparing for the CKA exam and reflects my understanding of the topic, and what I have focused on during my preparations.

Concepts

Kubernetes Documentation reference

First a few notes on different concepts when it comes to workloads in Kubernetes.

We have Pods which is the smallest component Kubernetes schedules. A pod can consist of one or more containers. Pods can be part of a Daemon Set or a Replica Set which can control how and where they are run (more on this later in this post). Pods and Replica Sets can be a part of a Deployment.

Note that even though we say a Pod "can be a part of" or "exist in" or even "controlled by" they are actually their own master and can live on their own. You can create a Pod without a Daemon-/Replicaset or a Deployment and you can even delete one of the latter without deleting the pods they previously managed.

A Pod is connected to a Daemon-/Replicaset or a Deployment by matching on its selectors. This means you can start with a Pod, and after a while you can add it to a Replicaset if you want to

Both Daemon Sets, Replica Sets and Deployments are declarative, meaning that you describe how you want things to be (e.g. I want a Replica set containing two of these Pods), Kubernetes will make it happen (e.g. deploy two Pods matching the PodTemplate in the specified Replica set)

Daemon sets

Kubernetes Documentation Reference

A Daemon set is a way to ensure that a Pod is present on a defined set of Nodes. Oftentimes that will probably mean all of your nodes, but it could also be just a subset of nodes.

Typical usecases might include running a Pod for log collection on all nodes or a monitoring pod. Or maybe you have different hardware configuration on your nodes and you want to make that available to a specific set of pods, e.g. GPUs, flash disks etc

A Daemon set will automatically spin up a Pod on a Node missing an instance of said Pod. E.g. if you are scaling out a cluster with a new node.

To run a Pod only on a select set of nodes we use the nodeSelector or the affinity parameters. reference

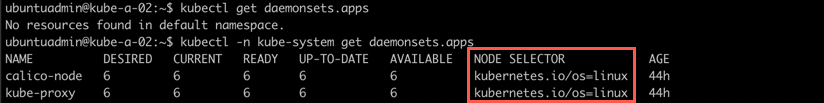

To see an example of a Daemon set we can check our default kube-system namespace

1kubectl -n kube-system get daemonsets

Both the kube-proxy and my network component Calico is running pods in Daemon sets

Notice the Node selector column there which is what the Daemon sets seems to use to know which Nodes the pods should run on.

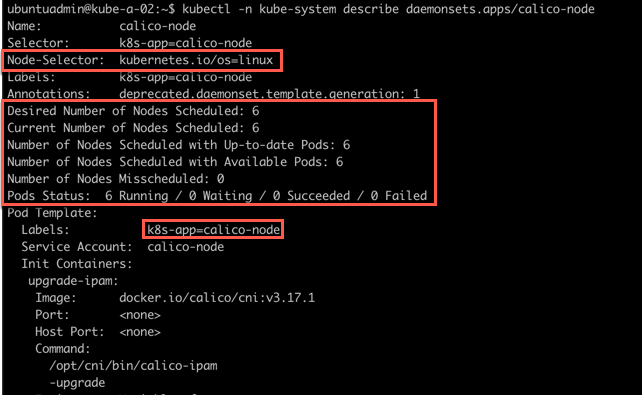

We can verify this by checking the Daemon set spec

1kubectl -n kube-system describe daemonset/calico

Here we can see that the NodeSelector is infact the one we saw from the kubectl -n kube-system get ds output. Also notice that the daemon set keeps track of both the desired number of nodes and the current status.

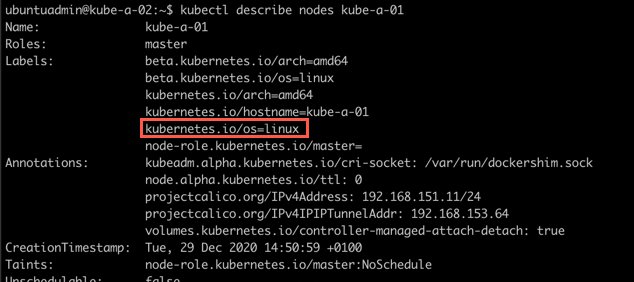

Let's check the config of one of our nodes to check it's labels

1kubectl describe node kube-a-01

Here we can verify that this node in fact has a label matching the NodeSelector used in the Daemon set config

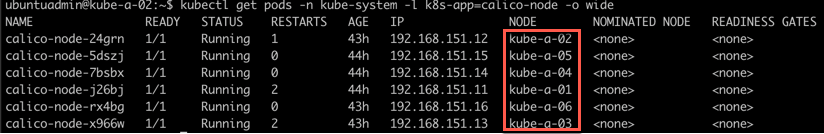

From the Daemon set config we also saw the label used for the Pods so let's try to check which nodes the pods are running on. We'll use that label we found to filter the output

1kubectl get pods -n kube-system -l k8s-app=calico-node -o wide

As we can see there's one calico-node Pod running on each node

Replica sets

Kubernetes Documentation Reference

A Replica set is used to ensure that a specific set of Pods is running at all times. Kind of like a watch dog.

The Replica set can contain one or more pods and each pod can have one or more instances. Meaning you can create a Replica set containing only one Pod specifying to run only one instance of that Pod. The use case for this is that the Replica set will ensure that this one Pod always runs, and it also enables scaling mechanisms if you later on need to scale out that pod.

As mentioned previously a Pod can be added to a Replica set after its creation (you can create the Pod first and have a new Replica set "discover" the Pod), the Replica set will find its pods through the selector

Deployments

Kubernetes Documentation Reference

Finally let's look at Deployments.

In newer versions of Kubernetes a Deploymentis the preferred way to manage pods. A Deployment actually uses Replica Sets to orchestrate the Pod lifecycle. Meaning that instead of manually creating Replica Sets you should be using Deployments.

Deployments adds [to a replica set] the ability to do rolling updates and rollback functionality amongst other things.

Let's see a few quick examples

Create a deployment

Kubernetes Documentation reference

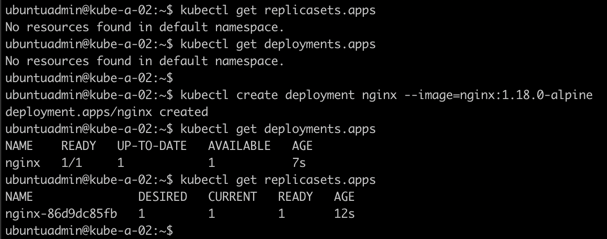

First we'll verify that there's no Deployments or Replica sets in our default namespace. Then we'll create a Deployment and see what happens

1kubectl get replicasets

2kubectl get deployments

3

4kubectl create deployment nginx --image=nginx:1.18.0-alpine

5

6kubectl get deployments

7kubectl get replicasets

From this we can see that our new Deployment actually created a Replica set!

Scale a deployment

Kubernetes Documentation reference

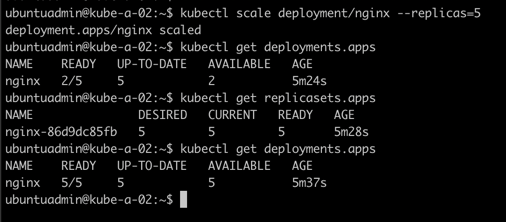

Now we'll scale up the deployment to 5 replicas

1kubectl scale deployment/nginx --replicas=5

2

3kubectl get deployments

4kubectl get replicasets

Nice. We have scaled our deployment and that instructed the replica set to provision four more pods.

There's more scaling options available in a Deployment, both Autoscaling and Proportional scaling. Check the documentation for more

Now let's take a quick look at updates and finally rollbacks

Update a deployment

Kubernetes Documentation reference

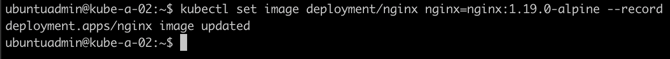

We'll change the nginx image version used from 1.18.0-alpine to 1.19.0-alpine and add the --record flag to the command

1kubectl set image deployment/nginx nginx=nginx:1.19.0-alpine --record

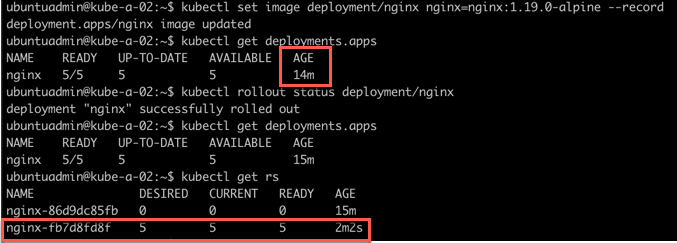

Let's check the status of our deployment

1kubectl get deployments

2kubectl rollout status deployment/nginx

3kubectl get rs

Interestingly we notice that our deployment looks the same at first sight. We verify the rollout status which says it's finished. When we then look at the Replica set we can see that it was actually deployed a new Replica set with pods running the new image.

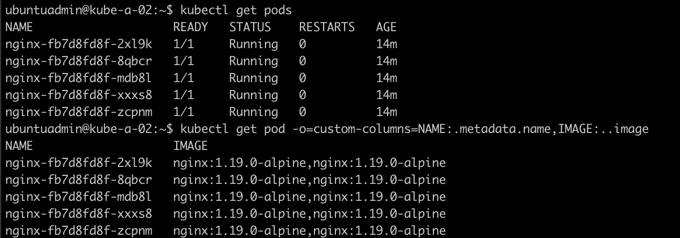

Let's check one of the pods just to make sure the new image is used

1kubectl get pods

2kubectl get pods -o=custom-columns=NAME:.metadata.name,IMAGE:..imag

Rollback a deployment

Kubernetes documentation reference

So, let's say that this version broke something in our application and we need to roll back to the previous version.

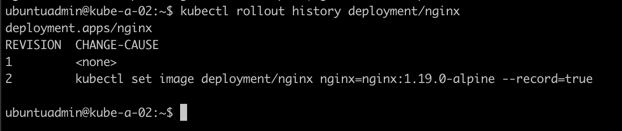

First let's check the rollout history

1kubectl rollout history deployment/nginx

Now if I want to rollback to the previous verson I'll run the rollout undo command. Note that we could also specify a specific revision with the --to-revision flag

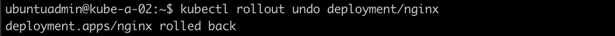

1kubectl rollout undo deployment/nginx

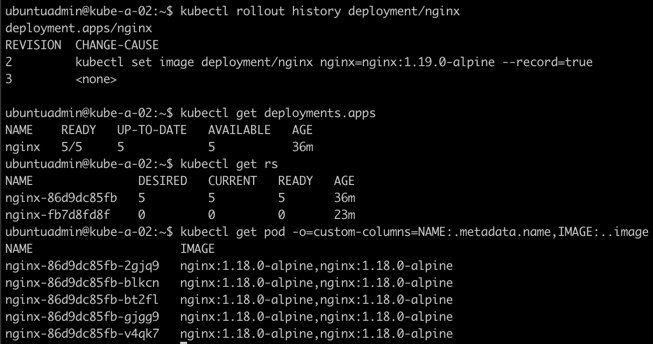

Let's take a closer look at what happened

1kubectl rollout history deployment/nginx

2kubectl get deployments

3kubectl get rs

As we can see we have a new rollout version. Interestingly we can see that there wasn't created a new Replica set, Kubernetes did actually just scale down the version running the 1.19 image to 0 replicas (pods) and then scaled up the old version running on 1.18 to five replicas.

Finally we verify that the pods are infact running the 1.18.0-alpine image.

Control a rolling update

Kubernetes Documentation reference

This section was added on 2021-01-05

To control how a (rolling) update of a deployment is done we can use maxUnavailable and maxSurge. Other important parameters are minReadySeconds and progressDeadlineSeconds

This parameter configures how many replicas can be unavailable during a deployment. This can be defined as a percentage or a count. Say you have 4 replicas of a pod and set the maxUnavailable to 50%, the deployment will scale down the current replica set to 2 replicas, then scale up the new replica set to 2 replicas, bringing the total to 4. Then it will scale down the old replica set to 0 and finally up the new replica set to 4.

Setting a higher value will speed up the deployment at the cost of less availability, likewise a lower value will ensure more availability at the cost of rollout speed.

maxSurge defines how much more resources you allow the deployment to use. As with maxUnavailable you can define the value in percentage or a count.

Say you allow 25% maxSurge. In our previous example this would allow the deployment to deploy two extra pods, bringing the total up to 6, during the rollout.

This can speed up the deployment at the cost of additional used resources.

This parameter isn't specific to deployment updates/rollouts. It tells Kubernetes how long to wait before it checks if the pod is ready and available. In a rolling deployment this is important so that Kubernetes doesn't roll out too quickly and risking the new pods crashing after killing the old ones.

This parameter can be used to time out a Deployment. Consider a deployment with pods crashing and never gets ready, this is something that should be looked at and you need the deployment to fail and e.g trigger an alert, instead of the deployment trying to keep retrying indefinetely.

By default this parameter is specified to 600 seconds (10 minutes). If the deployment doesn't make progress (a pod gets created or deleted) inside this value it will time out the deployment.

Summary

This has been a quick overview of the differences between Daemon sets, Replica sets and Deployments. There's obviously much more to the concepts than I've covered in this post. I urge you to check out the Documentation for more info, and test things out on your own.

Thanks for reading, and if you have any questions or comments feel free to reach out